It was only 11 months ago that I wrote an Article entitled Nvidia In the Valley; the occasion was yet another plummet in its stock price:

To say that the company has turned things around is, to be sure, an understatement:

That big jump in May came with Nvidia's last earnings, when the company shocked investors with an incredibly ambitious forecast; this past week Nvidia handily exceeded those expectations and forecast even bigger growth going forward. From the Wall Street Journal:

Chip maker Nvidia said revenue in its recently completed quarter more than doubled from a year ago, setting a new company record, and projected that surging interest in artificial intelligence is propelling its business faster than expected. Nvidia is at the heart of the boom in artificial intelligence that made it a $1 trillion company this year, and it is forecasting growth that outpaces even the most bullish analyst projections.

Nvidia's stock, already the top performer in the S&P 500 this year, rose 7.5% following the results, which would amount to about $87 billion in market value. The company said revenue more than doubled in its fiscal second quarter to about $13.5 billion, far ahead of Wall Street forecasts in a FactSet survey. Even more strikingly, it said revenue in its current quarter would be around $16 billion, besting expectations by about $3.5 billion. Net profit for the company's second quarter was $6.19 billion, also surpassing forecasts.

The results show that a wave of investment in artificial intelligence that began late last year with the arrival of OpenAI's ChatGPT language-generation tool is gaining steam as companies and governments seek to harness its power in business and everyday life. Many companies see AI as indispensable to their future growth and are making big investments in computing infrastructure to support it.

Now the big question on everyone's mind is whether Nvidia is the new Cisco:

I don't think so, at least in the near term: there are some fundamental differences between Nvidia and Cisco that are worth teasing out. The bigger question is the long run, and here the comparison might be more apt.

Nvidia and Cisco

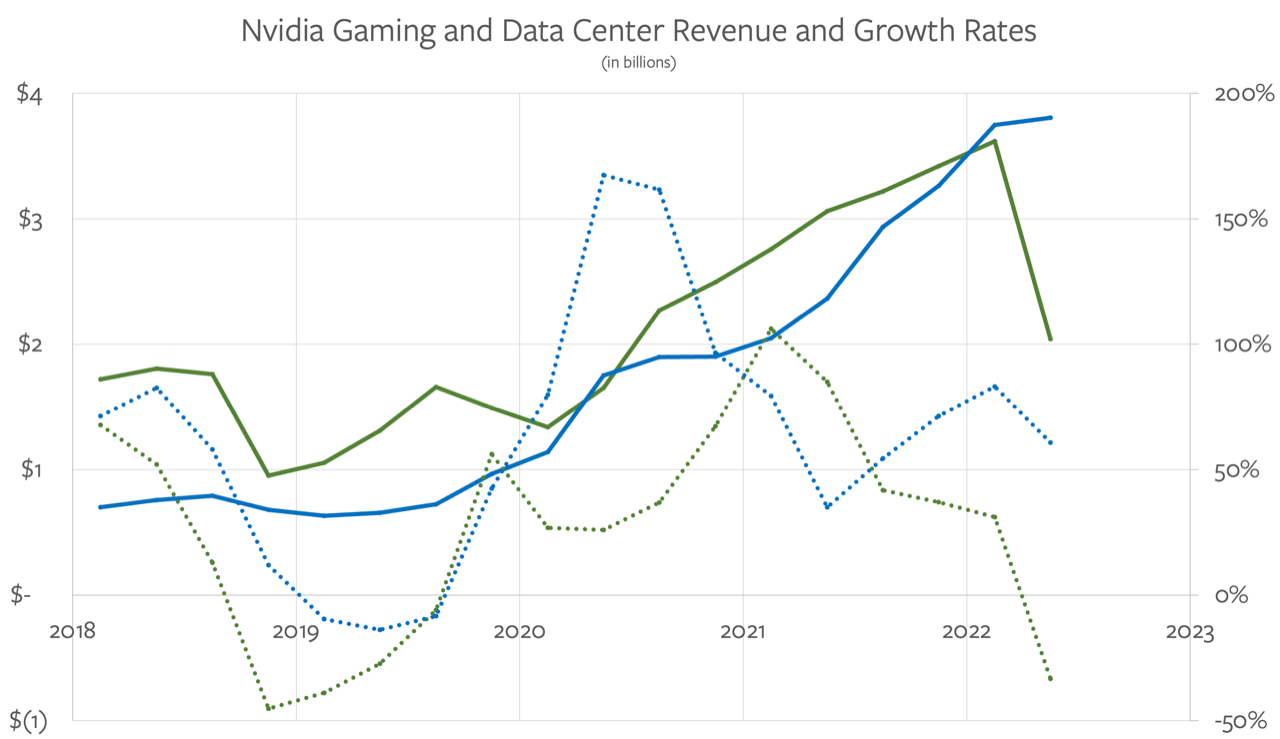

The first difference between Nvidia and Cisco is in the above charts: Nvidia already went through a crash, thanks to the double whammy of Ethereum moving to proof-of-stake and the COVID cliff in PC sales; both left Nvidia with huge amounts of inventory it had to write off over the second half of last year. The bright spot for Nvidia was the steady growth of data center revenue, thanks to the rise of machine learning workloads; I included this chart in that Article last fall:

What has happened over the last two quarters is that data center revenue is devouring the rest of the company; here is an updated version of that same chart:

Here is Nvidia's revenue mix:

This dramatic shift in Nvidia's business provides some interesting contrasts to Cisco's dot-com run-up. First, here were Cisco's revenue, gross profit, net profit, and stock price in the ten years starting from its 1993 IPO:

Here are Nvidia's last ten years:

The first thing to note is the extent to which Nvidia's crash last year looks similar to Cisco's dot-com crash: in both cases steady but steep revenue increases initially outpaced the stock price, which eventually overshot just a few quarters before massive inventory write-downs led to big decreases in profitability (score one for crypto optimists hopeful that the current doldrums are simply their own dot-com hangover).

Cisco, though, didn't have a second act like this data center explosion. What is notable is the extent to which Nvidia's revenue increase is matching the slope of the stock price increase (obviously this is inexact given the different axes); it seems likely that the stock will overshoot revenue growth soon enough, but it hasn't really happened yet. It's also worth noting how much more disciplined Nvidia appears to be in terms of below-the-line costs: its net profit is moving in concert with its revenue, unlike Cisco in the 90s; I suspect this is a function of Nvidia being a much larger and more mature company.

Another difference is the nature of Nvidia's customers: over 50% of the company's Q2 data center revenue came from the large cloud service providers, followed by large consumer Internet companies (i.e. Meta). This category does, of course, include the startups that once might have bought Cisco routers and Sun servers directly, and now rent capacity (if they can get it); cloud providers, though, monetize their hardware immediately, which is good for Nvidia.

Still, there is an important difference from other cloud workloads: previously a new company or line of business only ramped up its cloud spending in step with usage, which has to correlate with customer acquisition, if not revenue. Model training, though, is an up-front cost, not dissimilar to the cost of buying those Sun servers and Cisco routers in the dot-com era; that is cloud revenue with a much higher likelihood of disappearing if the company in question doesn't find a market.

This point is relevant to Nvidia given that training is the part of AI where the company is most dominant, thanks to both its software ecosystem and its ability to operate huge fleets of Nvidia chips as a single GPU; inference is where Nvidia will first see challenges, and that is also the area of AI that is correlated with usage, and thus more durable from a cloud provider's perspective.

Those points about a software ecosystem and hardware scalability are also the biggest reason why Nvidia is different from Cisco. Nvidia has a moat in both, along with a substantial manufacturing advantage thanks to its upfront payments to TSMC over the last several years to secure its own 4nm line (and the luck of asking for more scale at a time when TSMC's other sources of high performance computing revenue are in a slump). There is certainly a huge incentive for both the cloud providers and the large Internet companies to bridge Nvidia's moats (see AWS's investments in its own chips, for example, or Meta's development of and support for PyTorch), but right now Nvidia has a massive lead, and the frenzy inspired by ChatGPT is only deepening its install base, with all of the positive ecosystem effects that entails.

GPU Demand

The biggest challenge facing Nvidia is the one that is ultimately out of its control: what does the final market look like?

Go back to the dot-com era, and the era that preceded it. The advent of computing, first in the form of mainframes and then the PC, digitized information, making it infinitely duplicable. Then came the Internet, which made the marginal cost of distributing that content go to zero (with the caveat that most people had very low bandwidth). This was an obvious business opportunity that lots of startups jumped all over, even as telecom companies took on the bandwidth problem; Cisco was the beneficiary of both.

The missing element, though, was demand: consistent consumer demand for Internet applications only started to arrive with the advent of broadband connections in the 2000s (thanks in part to a buildout that bankrupted said telecom companies), and then exploded with smartphones a decade later, which made the Internet accessible anytime, anywhere. It was demand that made the router business as big as dot-com investors thought it might be, even though by then Cisco had a host of competitors, including large cloud providers who built (and open-sourced) their own.

There are numerous possible starting points to choose for AI: machine learning has obviously been a thing for a while, or you could point to the 2017 invention of the transformer; the release of GPT-3 in 2020 was perhaps akin to the release of the Mosaic web browser, which would make ChatGPT the Netscape IPO. One way to categorize this emergence is to characterize training as akin to digitization in the previous era, and generation (i.e. inference) as akin to distribution. Once again there are obvious business opportunities that arise from combining the two, and once again startups are jumping all over them, along with the big incumbents.

However you want to make the analogy, what is important to note is that the missing element is the same: demand. ChatGPT took the world by storm, and the use of AI for writing code is both proliferating widely and extremely high leverage. Every SaaS company in tech, meanwhile, is hard at work on an AI strategy, for the benefit of its sales team if nothing else. That is no small thing, and the exploration and implementation of those strategies will burn up a lot of Nvidia GPUs over the next few years. The ultimate question, though, is how much of this AI stuff actually gets used, and that is out of Nvidia's control.

My best guess is that the next several years will be occupied with building out the most obvious use cases, particularly in the enterprise; the analogy here is to the 2000s build-out of the Internet. The question, though, is what the analogy will be to mobile (and the cloud), which exploded demand and led to one of the most profitable decades tech has ever seen. The answer may be an already discarded fad: the metaverse.

A GPU Overhang and the Metaverse

In April 2022, when DALL-E 2 came out, I wrote DALL-E, the Metaverse, and Zero Marginal Content, and highlighted three trends:

- First, the gaming industry was increasingly about a few AAA games, small indie titles, and the huge sea of mobile; the limiting factor in further development was the astronomical cost of producing high quality assets.

- Second, social media succeeded by virtue of making content creation free, because users created the content of their own volition.

- Third, TikTok pointed to a future where each person not only had their own feed, but where the provenance of that content didn't matter.

AI is how these three trends might intersect:

What is interesting about DALL-E is that it points to a future where these three trends can be combined. DALL-E, at the end of the day, is ultimately a product of human-generated content, just like its GPT-3 cousin. The latter, of course, is about text, while DALL-E is about images. Notice, though, that progression from text to images; it follows that machine learning-generated video is next. This will likely take several years, of course; video is a much more difficult problem, and responsive 3D environments more difficult yet, but this is a path the industry has trod before:

- Game developers pushed the limits on text, then images, then video, then 3D

- Social media drives content creation costs to zero first on text, then images, then video

- Machine learning models can now create text and images for zero marginal cost

In the very long run this points to a metaverse vision that is much less deterministic than your typical video game, yet much richer than what is generated on social media. Imagine environments that are not drawn by artists but rather created by AI: this not only increases the possibilities, but crucially, decreases the costs.

I wrote in the conclusion:

Machine learning generated content is just the next step beyond TikTok: instead of pulling content from anywhere on the network, GPT and DALL-E and other similar models generate new content from content, at zero marginal cost. This is how the economics of the metaverse will ultimately make sense: virtual worlds need virtual content created at approximately zero cost, fully customizable to the individual.

Zero marginal cost is, I should note, aspirational at this point: inference is expensive, both in terms of power and in terms of the need to pay off all of the money that is showing up on Nvidia's earnings. It's possible to imagine a scenario a few years down the line, though, where Nvidia has deployed vast numbers of ever more powerful GPUs, and inspired massive competition such that the world's supply of GPU power far exceeds demand, driving marginal costs down to the cost of energy (which hopefully will have become cheaper as well); suddenly the idea of making virtual environments on demand won't seem so far-fetched, opening up entirely new end-user experiences that explode demand in the way that mobile once did.

The GPU Age

The challenge for Nvidia is that this future isn't particularly investable; indeed, the idea assumes a capacity overhang at some point, which isn't great for the stock price! That, though, is how technology advances, and even if a cliff eventually comes, there is a lot of money to be made in the meantime.

That noted, the biggest short-term question I have is around Nvidia CEO Jensen Huang's insistence that the current wave of demand is in fact the dawn of what he calls accelerated computing; from the Nvidia earnings call:

I'm reluctant to guess about the future and so I'll answer the question from the first principle of computer science perspective. It is recognized for some time now that…using general purpose computing at scale is no longer the best way to go forward. It's too energy costly, it's too expensive, and the performance of the applications are too slow. And finally, the world has a new way of doing it. It's called accelerated computing and what kicked it into turbocharge is generative AI. But accelerated computing could be used for all kinds of different applications that's already in the data center. And by using it, you offload the CPUs. You save a ton of money in order of magnitude, in cost and order of magnitude and energy and the throughput is higher and that's what the industry is really responding to.

Going forward, the best way to invest in the data center is to divert the capital investment from general purpose computing and focus it on generative AI and accelerated computing. Generative AI provides a new way of generating productivity, a new way of generating new services to offer to your customers, and accelerated computing helps you save money and save power. And the number of applications is, well, tons. Lots of developers, lots of applications, lots of libraries. It's ready to be deployed.

And so I think the data centers around the world recognize this, that this is the best way to deploy resources, deploy capital going forward for data centers. This is true for the world's clouds and you're seeing a whole crop of new GPU-specialized cloud service providers. One of the famous ones is CoreWeave and they're doing incredibly well. But you're seeing the regional GPU specialist service providers all over the world now. And it's because they all recognize the same thing, that the best way to invest their capital going forward is to put it into accelerated computing and generative AI.

My interpretation of Huang's outlook is that all of these GPUs will be used for a lot of the same activities that currently run on CPUs; that is certainly a bullish view for Nvidia, because it means the capacity overhang that may come from pursuing generative AI will be back-filled by current cloud computing workloads. And, to be fair, Huang has a point about the power and space limitations of current architectures.

That noted, I'm skeptical: humans (and companies) are lazy, and not only are CPU-based applications easier to develop, they are also mostly already built. I have a hard time seeing which companies are going to go through the time and effort to port things that already run on CPUs to GPUs; at the end of the day, the applications that run in a cloud are determined by the customers who provide the demand for cloud resources, not by cloud providers looking to optimize FLOP/rack.

If GPUs are going to be as big a market as Nvidia's investors hope, it will be because applications that are only possible with GPUs generate the demand to make it so. I'm confident that time will come; what neither I, nor Huang, nor anyone else can be sure of is when that time will arrive.


Copyright for syndicated content belongs to the linked source: Hacker News, https://stratechery.com/2023/nvidia-on-the-mountaintop/
